OpenAI Model Solves Six of Ten Unpublished Math Problems

Experts Confirm 6 OpenAI Proofs But Debate the Rest

One day after the decryption of official solutions in the First Proof challenge, mathematicians have begun offering detailed assessments of the proofs submitted by an OpenAI model still in training. While six of the ten attempts have gained broad acceptance as correct, the remaining four have sparked debate over completeness, elegance, and genuine novelty.

The measured response reflects the careful nature of the mathematical community, where claims require thorough scrutiny before acceptance.

Recap of the First Proof Challenge

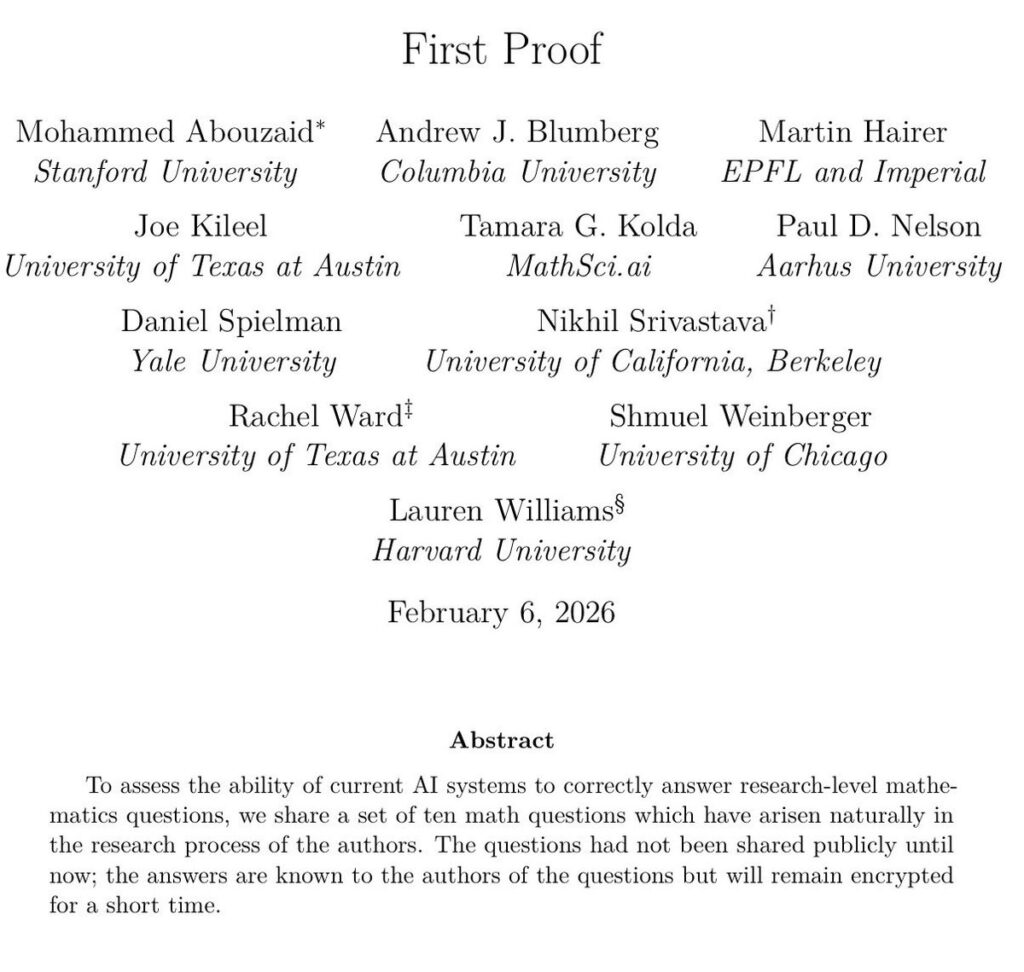

Launched on February 5, 2026, the First Proof challenge presented ten research-level problems contributed by eleven mathematicians. The problems came from active research programs in areas such as stochastic analysis, representation theory, and spectral graph theory. Each had a known proof of approximately five pages, but none of these proofs had ever been published or posted online.

The official solutions remained encrypted until February 13, providing a narrow window for any system to attempt solutions without risk of having seen similar material in training data. The design aimed to address a persistent criticism of AI benchmarks: that strong performance might reflect memorization rather than reasoning.
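The article does not say how the organizers encrypted or released their solutions. As a hedged illustration of the underlying idea (fixing a text in advance and revealing it only later, in a way outsiders can check), here is a minimal Python sketch of a hash commitment. This is a swapped-in technique, not necessarily the organizers' scheme, and the function names `commit` and `verify` are hypothetical. A digest is published before the challenge; once the solution and a secret nonce are released, anyone can confirm the text was not altered in the meantime.

```python
import hashlib
import hmac
import secrets


def commit(solution_text: str) -> tuple[str, bytes]:
    """Return (public_digest, secret_nonce) for a solution kept private until release."""
    # A random 32-byte nonce stops anyone from confirming a guessed solution against the digest.
    nonce = secrets.token_bytes(32)
    digest = hashlib.sha256(nonce + solution_text.encode("utf-8")).hexdigest()
    return digest, nonce


def verify(public_digest: str, nonce: bytes, revealed_text: str) -> bool:
    """Check that a revealed solution matches the digest published beforehand."""
    recomputed = hashlib.sha256(nonce + revealed_text.encode("utf-8")).hexdigest()
    # compare_digest performs a constant-time comparison of the two hex strings.
    return hmac.compare_digest(recomputed, public_digest)


if __name__ == "__main__":
    # Hypothetical placeholder text; no real official proof is reproduced here.
    digest, nonce = commit("Official proof of Problem 1 (placeholder).")
    print("published before the challenge:", digest)
    # After release, anyone can check the revealed text against the earlier digest.
    print(verify(digest, nonce, "Official proof of Problem 1 (placeholder)."))  # True
    print(verify(digest, nonce, "A quietly edited proof."))  # False
```

The design choice worth noting: a commitment only proves the text did not change after publication of the digest. Keeping the content hidden until release is what removes the risk that it leaked into a model's training data.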

OpenAI confirmed it ran its internal model throughout the week, with human involvement limited to formatting and selecting the best outputs.

Initial Confirmations and Points of Debate

As of February 14, specialists in the relevant subfields have signaled high confidence in six of the OpenAI submissions. These proofs align closely with the newly released official versions in structure and key insights.

The four remaining attempts have received more qualified feedback. Two contain promising partial results and correct intermediate steps, yet fail to close the argument fully. The other two deviate in ways that experts have described as clear errors.

One mathematician familiar with the challenge noted that some successful proofs follow relatively direct paths once the central idea is identified. Another observed that certain partial attempts display creative approaches, even if incomplete.

Independent researchers who tested their own systems during the challenge period reported similar outcomes: six full matches, two partial successes, and two failures.

Why These Problems Matter

Research-level mathematics differs markedly from competition problems. International Mathematical Olympiad questions, while difficult, follow established styles and reward intense preparation. In contrast, the First Proof problems emerged from ongoing investigations, requiring not only technical skill but also the ability to navigate uncharted territory.

A five-page proof in these areas typically involves layered arguments, subtle lemmas, and careful handling of edge cases. Success demands understanding the motivation behind each step, something human mathematicians develop through years of immersion in the literature and its open questions.

The fields represented span pure mathematics with applications across science. Stochastic analysis underpins models in finance and physics. Representation theory connects algebra to symmetry in quantum mechanics. Spectral graph theory informs network analysis and computer science algorithms.

Jakub Pachocki (@merettm) wrote on February 14, 2026: "Very excited about the 'First Proof' challenge. I believe novel frontier research is perhaps the most important way to evaluate capabilities of the next generation of AI models."

We have run our internal model with limited human supervision on the ten proposed problems. The…

Historical Context of AI in Mathematics

Artificial intelligence has advanced steadily in mathematical domains, though gaps remain evident.

Early systems handled computation well but faltered on conceptual proof construction. An important enabler was the rise of proof assistants such as Lean, which check every step of a proof mechanically and so allow theorems to be formally verified. More recent large language models integrated with search and verification components have pushed performance further.
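To illustrate what formal verification means in practice, here is a minimal Lean 4 sketch, assuming a recent Lean 4 toolchain with no extra libraries; the theorem names are hypothetical. The first statement is accepted only because Lean checks the cited lemma `Nat.add_comm` against the goal. The second shows how a proof assistant treats an unfinished argument: the placeholder `sorry` lets the file compile, but Lean warns that the declaration uses `sorry`, so the gap cannot pass silently the way an incomplete step can in a written proof.

```lean
-- A complete proof: Lean checks that `Nat.add_comm a b` proves exactly this statement.
theorem add_comm_example (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b

-- An unfinished proof: `sorry` stands in for the missing argument,
-- and Lean emits a warning that the declaration uses `sorry`.
theorem unfinished_example (n : Nat) : 0 < n + 1 := by
  sorry
```

Nothing in the article indicates that the First Proof submissions were written in Lean; the example only shows the kind of mechanical checking a proof assistant performs.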

Google DeepMind’s AlphaProof, working alongside its AlphaGeometry 2 system, achieved silver-medal-level performance at the 2024 International Mathematical Olympiad, solving four of six problems. OpenAI’s o1 series demonstrated strong results on graduate-level benchmarks and competition archives.

Those successes, however, drew on problems that existed publicly for years or decades. The First Proof challenge removed that variable by using entirely new material.

The Verification Process

Mathematical correctness is not always immediate to establish. Unlike software, which can be run and tested, proofs must withstand careful reading for logical gaps, undefined terms, or overlooked cases.

Domain experts are now examining each OpenAI submission line by line. Some have shared preliminary comments anonymously, emphasizing the need for patience. Full consensus may take weeks, particularly for the borderline cases.

The challenge organizers have encouraged open discussion, and the public availability of both official proofs and AI attempts facilitates community review.

Broader Implications for Research

If six proofs hold up under sustained scrutiny, the result would demonstrate that current frontier models can contribute meaningfully to active research areas. Tools capable of generating correct proofs for unseen problems could assist human mathematicians by exploring alternative lines of attack or checking conjectures.

At the same time, the incomplete attempts reveal persistent limitations. Rigorous proof requires absolute precision, and models still sometimes pursue fruitful-looking paths that ultimately lead nowhere or lack the necessary rigor.

The exercise also highlights the value of tailored evaluations. Model scores on standard benchmarks have risen rapidly, prompting researchers to create fresh tests that probe specific capabilities.

International Dimensions

The eleven contributing mathematicians come from institutions across North America, Europe, and Asia, reflecting the global nature of mathematical research. Early reviewers similarly span multiple countries, ensuring diverse perspectives on the submissions.

Advances in AI reasoning carry implications beyond mathematics. Fields that rely on rigorous proof, from theoretical computer science to parts of physics, could benefit from accelerated exploration of ideas.

Path Forward

The coming weeks will bring clearer verdicts as more specialists complete their readings. Whatever the final count of accepted proofs, the First Proof challenge has already provided valuable data on current AI capabilities in a controlled setting.

Researchers at other organizations have expressed interest in similar private or public tests. The combination of encrypted solutions and public release appears to offer a workable model for future evaluations.

For now, the mathematical community continues its deliberate process of assessment. Whether the tally stays at six or shifts as the borderline cases are resolved, the results mark a step forward in understanding how artificial intelligence can partner with human expertise in one of the most demanding intellectual pursuits.

The open availability of all materials ensures that discussion will continue well beyond the initial week, contributing to broader conversations about the role of AI in scientific discovery.